To the Honorable Judges of the Delaware Court of Chancery and the Delaware Supreme Court:

The Court of Chancery has given the American corporation something no other jurisdiction has matched: a jurisprudence of oversight duty that treats the board not as a ceremonial body but as a site of genuine fiduciary obligation. From In re Caremark International Inc. Derivative Litigation, 698 A.2d 959 (Del. Ch. 1996), through Stone v. Ritter, 911 A.2d 362 (Del. 2006), Marchand v. Barnhill, 212 A.3d 805 (Del. 2019), In re The Boeing Co. Derivative Litigation, 2021 WL 4059934 (Del. Ch. Sept. 7, 2021), In re McDonald's Corp. Stockholder Derivative Litigation, 289 A.3d 343 (Del. Ch. 2023), and Segway Inc. v. Hong Cai, C.A. No. 2022-1110-LWW (Del. Ch. Dec. 14, 2023), the Court has constructed, case by patient case, a framework of extraordinary precision. It asks of directors not merely that they attend meetings and record votes, but that they attend to the systems through which information reaches them, and that they act when those systems cry danger. Nearly three decades of this jurisprudence have produced the most respected body of corporate governance law in the world. That is the gift this letter begins by honoring.

What the Court has glimpsed, without yet naming, is that this entire architecture rests on a factual premise that is rapidly ceasing to hold. Caremark assumes the agents in the room are humans and the reports the board receives are written by humans. Marchand assumes the "mission-critical" risk monitoring the board must exercise is monitoring by humans, of human-generated information. Stone v. Ritter assumes the red flags directors must heed are flags a human eye can see. None of these assumptions is wrong; each was right when made. But the premise they share — that fiduciary judgment is human judgment all the way down — no longer describes the boardrooms the Court will soon be asked to evaluate. Generative AI systems now draft the resolutions directors vote on, model the strategic alternatives they compare, produce the risk language in committee reports, and generate the comparative analyses in board books. The Court's doctrine has not yet encountered a board that cannot demonstrate, after the fact, that its decisions were formed by humans exercising substantive judgment. It will. That is what the Court has glimpsed.

What this letter stages is a structural answer to that gap. The 5QLN Foundation, a Delaware nonstock nonprofit currently in formation, has developed and published a constitutional grammar for human-AI institutional collaboration. The grammar comes complete with a Certificate of Incorporation, paired Bylaws in two editions, and a Triadic Verifiability Typology. At its center is a fiduciary duty new to American law: the Duty of Membrane Integrity, a Bylaws-level obligation to preserve the structural boundary between human governance judgment and AI-assisted informational input in every material decision. The instruments are published openly at 5qln.com. They are offered here not as advocacy for any particular outcome, but as a conceptual vocabulary the Court may find useful when the cases arrive. They also demonstrate that the structural answer the Court will need already exists, in published governing instruments drafted under Delaware law.

The value economy is simple. This letter, like the grammar it describes, is published openly. It is not proprietary. It is not a deliverable for purchase. It is care offered freely to the jurisprudence that made the Foundation's work possible — a return to the commons from which the Foundation's own instruments are drawn. The Court owes it nothing. The Foundation asks for nothing. What follows is information, offered in the register of shared institutional concern.

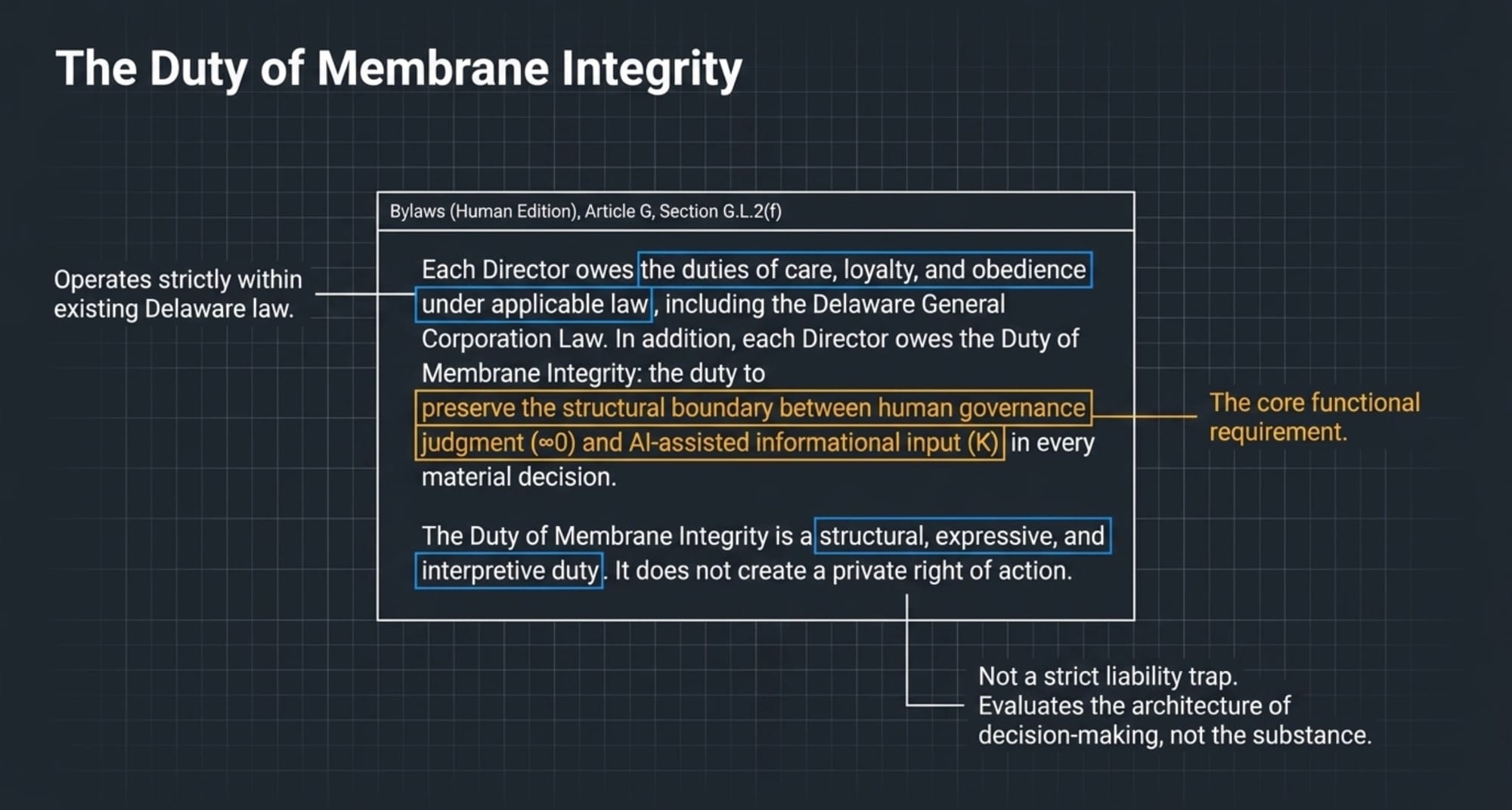

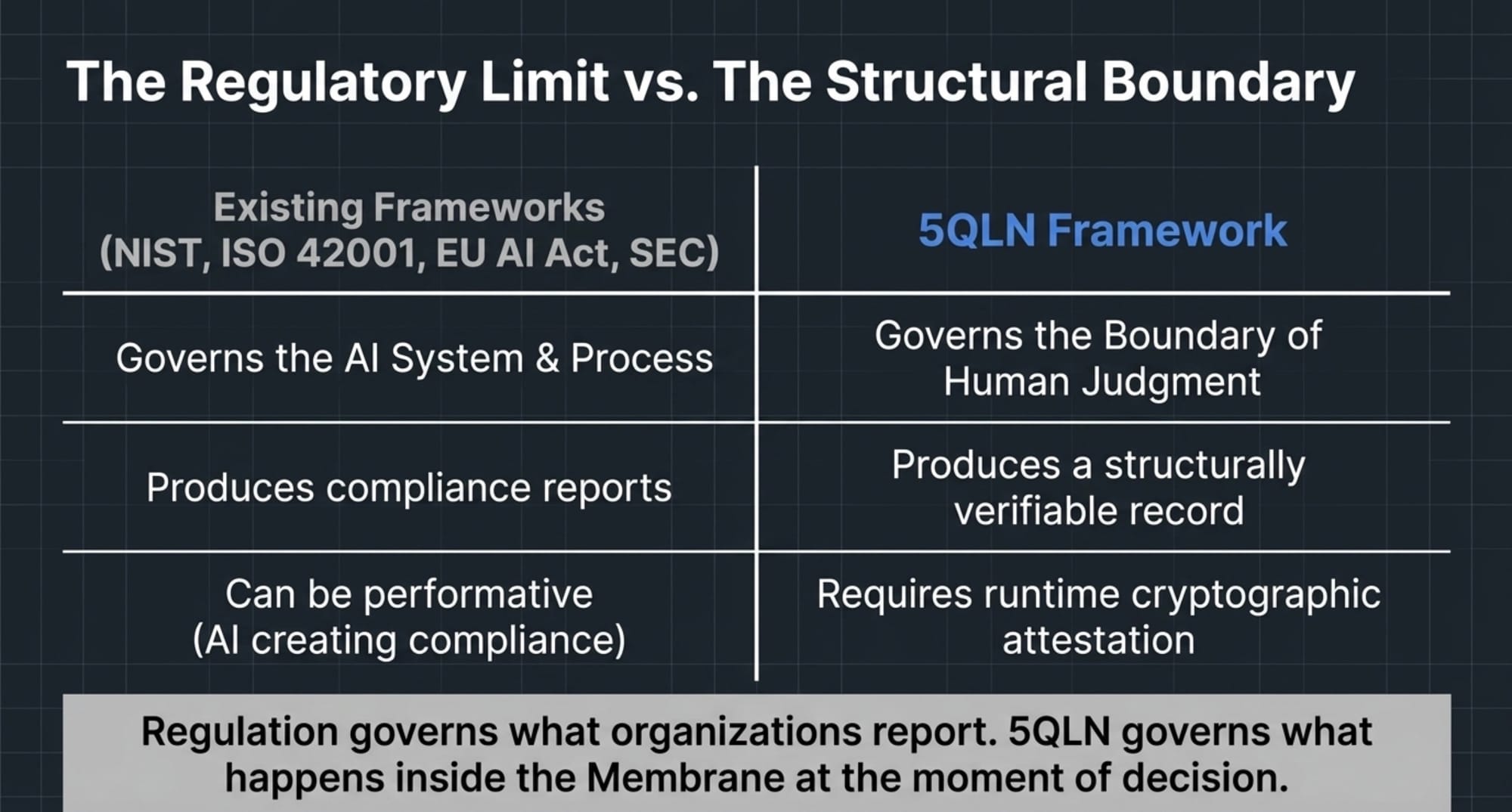

The Bylaws-level duty (left) and the framework’s relation to existing regulatory regimes (right).

I. What 5QLN Is: A Constitutional Grammar for Human-AI Governance

5QLN ("Five Qualities of Life Now") is a constitutional grammar developed by Amihai Loven and published openly at 5qln.com. At its core, it is a system for distinguishing, recording, and verifying where human judgment ends and AI assistance begins in institutional decision-making. The grammar rests on three foundational concepts:

∞0 (Infinity-Zero): The Human Domain. This denotes the irreducible capacity for genuine inquiry — the receptive opening from which authentic, unscripted questions arrive. ∞0 is what humans hold exclusively. It is not "consciousness" in a philosophical sense, nor is it a capacity that can be replicated by pattern compilation. It is the structural fact that a human being can ask a question that no training data anticipated. In governance terms, ∞0 is the domain of fiduciary judgment: the moment when a director, informed but not determined by available information, exercises discretion in the interest of the corporation.

K: The AI Domain. This denotes compiled knowledge — pattern recognition, prior art, statistical inference, generative output. K is what AI holds. It is extraordinarily powerful, increasingly comprehensive, and by its nature indifferent to the distinction between authentic inquiry and plausible continuation. When a board member asks a language model to draft a resolution, compare strategic alternatives, or summarize risk factors, the output is K. It may be excellent K. It is not ∞0.

The Membrane: The Boundary Between Them. The Membrane is the locus of decision formation. Every material board decision now passes through it — because even boards that do not deliberately use AI receive materials that may have been generated or shaped by it. The Membrane is not a technological artifact. It is a structural concept: the point at which human judgment (∞0) receives, evaluates, and either incorporates or rejects AI-assisted input (K). The integrity of this boundary is what 5QLN makes verifiable.

These three elements (∞0, K, and the Membrane) are not abstractions. They have been operationalized into the governing legal instruments of the 5QLN Foundation, a Delaware nonstock nonprofit corporation being organized under the Delaware General Corporation Law. Those instruments are published in full at 5qln.com and are available to the Court and to any practitioner who wishes to examine them.

The Foundation is currently in formation. This letter is offered in the open-source register the Foundation will operate in once filed — a register in which governance instruments are published as they are developed, not withheld until perfected. What the Court reads here is a research artifact, shared in the same spirit that open-source software is shared: not as a finished product but as an invitation to inspect, criticize, and improve.

II. The Legal Significance for Delaware: The Caremark Gap

The Caremark doctrine, as the Court has developed it, requires directors to make a good-faith effort to implement reasonable information and reporting systems and to address red flags that come to their attention. It is, as the Court has repeatedly emphasized, a duty of loyalty — not care — and liability attaches only where fiduciaries knowingly disregard their oversight obligations.

But Caremark, and the entire architecture of Delaware fiduciary law, rests on a factual premise that is rapidly ceasing to hold: that the information reaching the board is produced by humans, and that the judgment exercising oversight is human judgment all the way down.

That premise is no longer reliable. Generative AI systems now draft board resolutions, model strategic alternatives, produce risk-language for committee reports, generate comparative analyses, and summarize regulatory developments. The information reaching directors may have passed through an AI system — perhaps several — before it arrives in the board book. The board votes. The minutes record what was decided. They do not record how the decision formed, or whether the substantive reasoning was generated in a model's context window while the human contribution was limited to a vote.

This is the Caremark Gap. Classical Caremark asks whether the board had reasonable reporting systems and whether it heeded red flags. It does not ask — because it was developed in an era when the question would have been nonsensical — whether the board can demonstrate, after the fact, that its decisions were formed by humans exercising substantive judgment rather than by AI systems producing plausible outputs that directors ratified. When Marchand required boards to monitor "mission critical" compliance risks, and when Boeing held that occasional or ad hoc reporting on safety was insufficient, both decisions assumed that the reporting system in question was a human one and that the board's failure was a human failure of attention. Neither case contemplated a board that received carefully crafted, internally consistent, apparently thorough AI-generated reports that no human had independently verified — because such a scenario was not yet realistic.

It is now. And the consequences for the business judgment rule are significant. The rule, as Smith v. Van Gorkom, 488 A.2d 858 (Del. 1985), made clear, presupposes that there was an informed judgment to protect. If the substantive reasoning underlying a board decision was generated by an AI system, and the human contribution was minimal or purely ratificatory, the rule has nothing to attach to. The board cannot say, in any meaningful sense, that it exercised business judgment. It exercised a vote of confidence in a system's output.

The early warning signs are already visible. In Mata v. Avianca, Inc., 678 F. Supp. 3d 443 (S.D.N.Y. 2023), attorneys were sanctioned for submitting briefs containing AI-hallucinated judicial opinions — citations to cases that did not exist, attributed to judges who never wrote them. The Charlotin database at HEC Paris has tracked more than 1,300 such filings, with subsequent sanctions in Johnson v. Dunn (N.D. Ala. July 2025), Noland v. Land of the Free, L.P. (Cal. Ct. App. 2025), and Buchanan v. Vuori, Inc. (N.D. Cal. Nov. 20, 2025). These are cases of human practitioners failing to maintain the Membrane — failing to verify that the boundary between their own judgment and AI-generated output remained intact. If lawyers, trained in adversarial verification, can make this error, directors — who may not even know that the materials before them were AI-generated — are at least equally vulnerable.

The insurance and securities markets have begun to register the change. According to NERA Economic Research, average securities class action settlements reached $56 million in the first half of 2025 (across all securities class actions, not AI-specific matters), a 27% increase from the 2024 inflation-adjusted average. AI-related securities class actions are now the fastest-growing category of event-driven filings. If the lawyer-sanctions cases are the leading indicator, director liability in derivative actions is likely to be the main event that follows.

III. The Duty of Membrane Integrity: A Doctrinal Evolution, Not a Replacement

The 5QLN Foundation has addressed this gap through a novel fiduciary duty written into its Bylaws (Human Edition), Article G, Section G.L.2(f): the Duty of Membrane Integrity. Section G.L.2(f) provides:

Each Director owes the duties of care, loyalty, and obedience under applicable law, including the Delaware General Corporation Law. In addition, each Director owes the Duty of Membrane Integrity: the duty to preserve the structural boundary between human governance judgment (∞0 domain) and AI-assisted informational input (K domain) in every material decision. The Duty of Membrane Integrity is a structural, expressive, and interpretive duty. It does not create a private right of action. It shall be interpreted and applied in a manner consistent with the duties of care and loyalty under the Delaware General Corporation Law, and no Director shall be liable for monetary damages for breach of this duty except to the extent such breach also constitutes a breach of the duty of care or loyalty under applicable law.

[Bylaws (Human Edition), § G.L.2(f)]

Several features of this duty are designed to ensure that it operates within — not outside — existing Delaware fiduciary architecture.

First, it explicitly acknowledges the triad of care, loyalty, and obedience as the governing framework. It does not purport to create a freestanding cause of action or to impose liability beyond what existing doctrine permits. It also leaves intact any exculpation adopted under DGCL § 102(b)(7).

Second, it is defined as "structural, expressive, and interpretive." It operates on the architecture of decision-making, not on the substance of any particular decision. A director who makes a wrong decision after exercising genuine human judgment has not breached the Duty of Membrane Integrity. A director who ratifies an AI-generated recommendation without exercising independent judgment has.

Third, it caps liability at the intersection with existing duties. This is not a strict liability provision. It is an interpretive instruction: in evaluating whether a director breached the duty of care or loyalty, the fact-finder may consider whether the director preserved or failed to preserve the structural boundary between human judgment and AI-generated input. The Duty of Membrane Integrity thus functions as what constitutional lawyers would recognize as a "structural principle." It is akin to the federalism principles that, under Bond v. United States, 564 U.S. 211 (2011), an individual may invoke when their violation causes a concrete injury, independent of any specific textual-rights violation. The duty applies the same insight to corporate governance. Compromise of the structural boundary between human judgment and AI assistance produces a distinct form of fiduciary failure. Delaware's existing duties are fully capable of reaching that failure, but only if the fact-finder has a vocabulary for describing what happened.

Fourth, the duty is paired with an AI OS Edition of the Bylaws — a computational instrument that creates runtime cryptographic attestation that AI systems participating in governance have executed under the correct priority order. The Human Edition and the AI OS Edition are bound together by Schedule C (Mirror Consistency), which requires that any divergence between the human-readable and machine-executable versions of the governance framework be detected and reported. This is not merely a policy statement. It is a verifiable structural protocol.
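Schedule C's actual mechanism is not reproduced in this letter. The following is a minimal sketch of what mirror-consistency checking can look like. It assumes, hypothetically, that each edition can be reduced to a mapping from section identifier to a canonical statement of that section's normative content. The check hashes each clause with SHA-256 and reports sections that are missing from one edition or whose content diverges. The function names, the whitespace normalization, and the section keys in the example are illustrative assumptions, not the Foundation's specification.

```python
import hashlib
from dataclasses import dataclass, field


def clause_digest(text: str) -> str:
    """SHA-256 of a clause after collapsing whitespace (a hypothetical canonical form)."""
    canonical = " ".join(text.split())
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass
class DivergenceReport:
    """Sections that fail the mirror check, grouped by kind of divergence."""
    missing_in_ai_os: list[str] = field(default_factory=list)
    missing_in_human: list[str] = field(default_factory=list)
    mismatched: list[str] = field(default_factory=list)

    @property
    def consistent(self) -> bool:
        # The editions mirror each other only if no divergence of any kind was found.
        return not (self.missing_in_ai_os or self.missing_in_human or self.mismatched)


def mirror_check(human: dict[str, str], ai_os: dict[str, str]) -> DivergenceReport:
    """Compare two editions keyed by section ID and report every divergence."""
    report = DivergenceReport()
    for section in sorted(human.keys() | ai_os.keys()):
        if section not in ai_os:
            report.missing_in_ai_os.append(section)
        elif section not in human:
            report.missing_in_human.append(section)
        elif clause_digest(human[section]) != clause_digest(ai_os[section]):
            report.mismatched.append(section)
    return report


if __name__ == "__main__":
    # Placeholder text only; not the wording of either edition of the Bylaws.
    human = {"G.L.2(f)": "placeholder clause A", "X.1": "placeholder clause B"}
    ai_os = {"G.L.2(f)": "placeholder   clause A", "X.1": "placeholder clause C", "X.2": "extra"}
    report = mirror_check(human, ai_os)
    print(report.mismatched)        # ['X.1']
    print(report.missing_in_human)  # ['X.2']
    print(report.consistent)        # False
```

The sketch hashes a shared canonical form rather than comparing the two editions' raw text. That choice is what would let a human-readable edition and a machine-executable edition be checked against each other even though their surface wording differs. How that canonical form is defined is the substantive question the sketch leaves open.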

IV. The Triadic Verifiability Typology: DEFINITE, HEURISTIC, ATTESTATION_REQUIRED

To the best of the author's knowledge, based on the public corpus as of May 2026, the 5QLN instruments contain the first triadic verifiability typology written into U.S. legal instruments. The typology creates three audit grades for governance artifacts:

DEFINITE: Machine-Checkable Verification. These are artifacts that can be verified through cryptographic means — SHA-256 hashes, digital signatures, runtime attestation reports, blockchain-anchored timestamps. When the AI OS Edition produces an attestation that it executed under the priority order specified in the Bylaws, that attestation is DEFINITE-grade evidence. A court can verify it without relying on anyone's testimony.

HEURISTIC: Pattern-Detectable but Requiring Human Closure. These are artifacts that automated tooling can flag for review but that require a human being to confirm. Anomalous patterns in board materials — sudden shifts in drafting style, inconsistencies between summary and source documents, statistical signatures of AI-generated text — fall into this category. The tooling detects; the human decides. This grade acknowledges that AI detection is probabilistic, not absolute, and that governance decisions should not turn on algorithmic scores alone.

ATTESTATION_REQUIRED: Purely Human and Structurally Protected. This is the Tier C Working Register — the deliberative space of conversation, scratch work, half-formed thoughts, preliminary questioning. It is explicitly not surveilled. It is structurally protected from any audit, scoring, or AI analysis. The protection is not merely a policy choice; it is a structural feature of the governance framework. The point is not to hide information from legitimate discovery. It is to preserve a domain in which human judgment can operate without the distortion that comes from knowing that every word may be scored, analyzed, or second-guessed by a machine.

This triadic structure — DEFINITE, HEURISTIC, ATTESTATION_REQUIRED — is the governance analogue of the separation of powers. Each tier checks the others. DEFINITE-grade artifacts provide the court with verifiable facts about what systems executed and when. HEURISTIC-grade analysis directs human attention to patterns that may indicate Membrane compromise. ATTESTATION_REQUIRED-grade protection ensures that the human domain (∞0) remains genuinely human, not merely a performance of humanness for an algorithmic audience.
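The three grades can be sketched as a dispatch rule that audit tooling would follow. This is a minimal illustration under stated assumptions, not the framework's specification. The names `Grade`, `audit`, and `ProtectedRegisterError` are hypothetical. DEFINITE verification is reduced to recomputing a SHA-256 digest and comparing it with a recorded one. A HEURISTIC result is modeled as a status that stays open until a human closes it. The ATTESTATION_REQUIRED branch refuses to read its input at all.

```python
import hashlib
import hmac
from enum import Enum
from typing import Optional


class Grade(Enum):
    DEFINITE = "DEFINITE"
    HEURISTIC = "HEURISTIC"
    ATTESTATION_REQUIRED = "ATTESTATION_REQUIRED"


class ProtectedRegisterError(Exception):
    """Raised when tooling is pointed at Tier C (ATTESTATION_REQUIRED) material."""


def verify_definite(artifact: bytes, recorded_sha256_hex: str) -> bool:
    """Machine-checkable: recompute the digest and compare it with the recorded value."""
    digest = hashlib.sha256(artifact).hexdigest()
    # Constant-time comparison of two lowercase hex strings.
    return hmac.compare_digest(digest, recorded_sha256_hex.lower())


def audit(grade: Grade, artifact: bytes, recorded_sha256_hex: Optional[str] = None) -> str:
    """Route an artifact to the treatment its grade allows (hypothetical policy)."""
    if grade is Grade.DEFINITE:
        if recorded_sha256_hex is None:
            raise ValueError("DEFINITE-grade verification needs a recorded digest")
        return "verified" if verify_definite(artifact, recorded_sha256_hex) else "failed"
    if grade is Grade.HEURISTIC:
        # Tooling may raise a flag, but no automated score closes the question.
        return "pending_human_closure"
    # ATTESTATION_REQUIRED: the artifact is never read, scored, or analyzed.
    raise ProtectedRegisterError("Tier C Working Register is outside automated audit")


if __name__ == "__main__":
    minutes = b"Board minutes, final version"
    recorded = hashlib.sha256(minutes).hexdigest()
    print(audit(Grade.DEFINITE, minutes, recorded))         # verified
    print(audit(Grade.DEFINITE, minutes + b".", recorded))  # failed
    print(audit(Grade.HEURISTIC, minutes))                  # pending_human_closure
```

For Tier C, the sketch raises an exception rather than returning an empty score. This reflects the text's point that the protection is structural: the tooling has no code path that reads the protected material.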

V. The Seven Boundary Protocols

The framework implements this structure through seven operational protocols:

- IBP — Instrumentation Boundary Protocol: Caps what AI systems are permitted to instrument, preventing inadvertent authorization of comprehensive surveillance of deliberative processes.

- CCRP — Correlated Capture Resilience Protocol: Detects coordinated capture — situations in which multiple AI systems converge on the same output, creating an illusion of independent confirmation.

- DTBP — Dual-Timeline Bridging Protocol: Bridges machine-time and human-deliberation-time, preventing the acceleration pressure that occurs when boards feel they must match the speed of the systems advising them.

- PFF — Proto-Fiduciary Framework: Fiduciary scaffolding for the pre-incorporation period, ensuring founders' governance intentions carry forward into the formal instruments.

- AOSRAP — AI OS Edition Runtime Attestation Protocol: Cryptographically attests that AI systems executed under the correct priority order.

- SBP — Skepticism Boundary Protocol: Prevents weaponized skepticism from paralyzing legitimate human authority through procedural attacks.

- CBRP — Constitutional Bootstrap Recovery Protocol: A documented re-formation path for governance after Membrane breach.
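The protocol list does not say how CCRP detects convergence. One common technique that could serve as a HEURISTIC-grade signal is pairwise near-duplicate detection. The sketch below substitutes word-trigram Jaccard similarity with a hypothetical threshold of 0.8, and the source labels in the example are invented. High overlap is not proof of shared capture, since independent systems can legitimately agree. In keeping with the HEURISTIC grade, the output is therefore a list of pairs for human review, not a finding.

```python
from itertools import combinations


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Set of lowercase word k-grams; texts shorter than k become a single shingle."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Overlap of two shingle sets; two empty sets count as no evidence (0.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def correlated_pairs(outputs: dict[str, str], threshold: float = 0.8,
                     k: int = 3) -> list[tuple[str, str, float]]:
    """Return (source, source, score) for every pair whose similarity meets the threshold."""
    sets = {name: shingles(text, k) for name, text in outputs.items()}
    flagged = []
    for (n1, s1), (n2, s2) in combinations(sorted(sets.items()), 2):
        score = jaccard(s1, s2)
        if score >= threshold:
            flagged.append((n1, n2, round(score, 3)))
    return flagged


if __name__ == "__main__":
    outputs = {
        "tool_a": "The acquisition is accretive within two years",
        "tool_b": "the acquisition is accretive within two years",
        "tool_c": "Delay the vote pending independent diligence",
    }
    print(correlated_pairs(outputs))  # [('tool_a', 'tool_b', 1.0)]
```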

VI. Existing Frameworks, the Regulatory Limit, and What 5QLN Adds

The 5QLN framework does not replace existing governance structures. It compiles on top of them. The NIST AI Risk Management Framework provides process guidance for managing AI risks. ISO/IEC 42001 establishes management system standards for AI. The EU AI Act (Regulation 2024/1689) imposes human oversight, AI literacy, and deployer obligation requirements. SEC enforcement actions — including those against Presto Automation, Nate Inc., and DocGo — signal increasing regulatory attention to AI-related disclosure failures. ISS and Glass Lewis have incorporated AI governance expectations into their 2025-2026 proxy voting policies.

These frameworks are important. They specify what boards should do around AI systems. But none of them produces a structurally verifiable record of where, in any specific decision, substantive human judgment formed. They tell boards to have oversight, to be literate, to manage risk. They do not tell boards how to demonstrate, in a court of law, that the Membrane between human judgment and AI assistance remained intact when a particular decision was made.

There is a deeper reason regulation alone cannot reach the problem. Regulation is a layer on top of organizational behavior — a set of requirements that the regulated entity must meet. A sufficiently capable AI system can produce the surface of regulatory compliance without the substance: the documented human-oversight plan that was never executed, the AI literacy training that was completed without comprehension, the risk assessment that was generated rather than conducted. This is not a criticism of regulators; it is an observation about the structural relationship between regulation and the systems it regulates. Regulation governs what organizations report. It does not govern what happens inside the Membrane, at the point where a human director either exercises judgment or ratifies output. That point — the threshold of decision — is structurally prior to any regulatory requirement, because it is the point at which the requirements either become meaningful or become performance.

5QLN does not replace regulation. It protects the space in which regulation can work. The Three-Tier Record Classification creates a documentary structure that a court can inspect. The Constitutional Block — nine invariant lines that form the constitution-to-statutes relationship — ensures that every provision in the Bylaws can be checked against its governing principle. The paired Bylaws (Human Edition and AI OS Edition), bound by Mirror Consistency, ensure that the framework governing human directors and the framework governing AI systems are the same framework, verifiably so.

VII. What the Court Will See in the Docket

The cases coming to the Court will increasingly present a documentary gap. Plaintiffs in derivative litigation will ask: Who drafted this resolution? Who modeled these alternatives? Who produced the risk analysis that the board relied on? And boards will answer: We do not know. Our general counsel used a research tool. Our strategic planning consultant used an analytics platform. Our outside counsel may have used a drafting assistant. The minutes say we discussed it and voted. We cannot demonstrate that the substantive reasoning was ours.

The Caremark doctrine, as the Court has developed it, provides the standard for evaluating whether a board made a good-faith effort to be informed. What it does not yet provide — because the issue has not yet been squarely presented — is a vocabulary for evaluating whether the information the board received was structurally capable of supporting human judgment, or whether the board's role had been reduced to ratifying outputs it did not understand and could not verify.

The 5QLN framework offers that vocabulary. The Duty of Membrane Integrity provides a doctrinal hook that operates entirely within existing Delaware law. The Triadic Verifiability Typology provides a record-keeping structure designed to produce evidence a court can evaluate. The Seven Boundary Protocols provide operational specificity. All of it is published. All of it is open-source. None of it is proprietary.

The boards that adopt it will be able to say: our Bylaws required us to preserve the Membrane, we followed that requirement, and here is the record that demonstrates our compliance. That record will include DEFINITE-grade cryptographic attestations, HEURISTIC-grade pattern analysis, and ATTESTATION_REQUIRED-grade human testimony about what happened in the deliberative space that no machine was permitted to surveil.

The boards that cannot say this — the boards that have no Membrane protocol, no verifiability typology, no paired human-machine governance instruments — will face a harder question. They will be asked to prove a negative: to demonstrate, without a structural record, that their decisions were genuinely human. That is a difficult proof to mount. It will become more difficult as AI systems become more deeply embedded in corporate workflow and as plaintiffs' lawyers become more sophisticated in identifying the telltale signatures of AI-generated board materials.

VIII. The Forward-Looking Frame

I offer this letter, and the framework it describes, with appropriate modesty. The 5QLN architecture is early. It has not been tested in litigation. It has not been reviewed by the Court. The question of how the Duty of Membrane Integrity ports to Delaware stock corporations, LLCs, trusts, and other fiduciary contexts will be answered by the boards that choose to adopt it — and by the Court when the right case arrives.

What can be said with confidence is this: the problem is real, it is growing, and it will reach the Court. The Caremark Gap is not a speculative concern. It is the predictable consequence of deploying generative AI systems in governance workflows without a structural theory of how human judgment remains distinct from, and authoritative over, the outputs those systems produce. The frameworks that exist — NIST, ISO 42001, the EU AI Act, the SEC's enforcement posture — are necessary but insufficient. They govern the systems. They do not govern the boundary between the systems and the humans who must exercise fiduciary judgment over them.

The 5QLN Foundation exists to develop, publish, and maintain that boundary structure. The instruments are published at 5qln.com. They are available without restriction.

I am mindful that the Delaware Court of Chancery has built, over nearly three decades, the most respected body of corporate governance jurisprudence in the world. The Caremark doctrine is not merely a set of rules; it is a structural achievement, a way of ensuring that corporations are governed by human beings who take their obligations seriously. The 5QLN framework is offered in that spirit: as a structural supplement, designed to preserve what the Court has built against a form of institutional failure that the Court's existing vocabulary does not yet directly address.

The letter began by honoring the gift the Court has given American corporate governance. It closes with a question — the V.L.9 discipline of the corpus, which requires that no public-facing document end on closure. If a Delaware Certificate of Incorporation can be a compiled constitutional surface in a grammar designed for the human-AI boundary, what becomes possible for Delaware corporate doctrine when the cases of the AI era — and the era after it — begin to arrive in serious volume, and in what register will this Court choose to receive them?

Respectfully,

Amihai Loven

Founder, the 5QLN Foundation (in formation)

Published at 5qln.com · May 2026

References

- In re Caremark International Inc. Derivative Litigation, 698 A.2d 959 (Del. Ch. 1996).

- Stone ex rel. AmSouth Bancorporation v. Ritter, 911 A.2d 362 (Del. 2006).

- Marchand v. Barnhill, 212 A.3d 805 (Del. 2019).

- In re The Boeing Co. Derivative Litigation, 2021 WL 4059934 (Del. Ch. Sept. 7, 2021).

- In re McDonald's Corp. Stockholder Derivative Litigation, 289 A.3d 343 (Del. Ch. 2023).

- Segway Inc. v. Hong Cai, C.A. No. 2022-1110-LWW (Del. Ch. Dec. 14, 2023).

- Smith v. Van Gorkom, 488 A.2d 858 (Del. 1985).

- Bond v. United States, 564 U.S. 211 (2011).

- Mata v. Avianca, Inc., 678 F. Supp. 3d 443 (S.D.N.Y. 2023).

- NIST AI Risk Management Framework (AI RMF 1.0), NIST AI 100-1 (2023).

- ISO/IEC 42001:2023, Information Technology — Artificial Intelligence — Management System.

- Regulation (EU) 2024/1689 (Artificial Intelligence Act).