Every Institution Has a Financial Ledger. No Institution Has a Governance Ledger.

That is about to matter more than anyone realizes.

Think about what a financial ledger does. It does not tell an organization how to spend money. It makes visible how money moved. Where it came from. Where it went. Whether the flow was legitimate. Before standardized accounting, there was no structural mechanism for this — only trust. The ledger did not replace trust. It made trust verifiable.

Now ask a simple question: where is the equivalent for governance?

An organization makes a decision. The minutes record what was decided. Perhaps a vote count. Perhaps a summary of discussion. But the actual process by which the decision formed — where the question originated, whether it was genuine or manufactured, whether the core concern survived the deliberation or was quietly replaced by something more convenient, where the reasoning went shallow, who arrived with conclusions already formed — none of this is visible. Not to the public. Not to regulators. Not to the board members themselves, six months later.

There is no instrument for it. Governance has no ledger.

What legal language cannot see

Legal language governing institutions — nonprofit bylaws, articles of incorporation, fiduciary duties, corporate governance codes — shares a common architecture. It works backward. It describes what should happen, and then it provides mechanisms for adjudication when something goes wrong. Violation, evidence, judgment, consequence. This retrospective design is not a flaw. It worked well for centuries.

But it has a blind spot that has always existed and is now becoming dangerous.

Legal language has no jurisdiction over the space between "a question enters a boardroom" and "a decision is reached." It can tell you whether the decision violated a rule. It cannot tell you whether the process that produced the decision was alive or dead. Whether people were actually thinking or performing the appearance of thinking. Whether the mission was guiding the conversation or merely decorating it.

This blind spot did not matter much when institutions changed slowly, when all actors were human, and when the stakes of any single decision cycle were bounded. It matters now.

The case everyone is watching — and the question no one is asking

The OpenAI story is familiar by now. A nonprofit founded to benefit humanity. Billions in investment. A board that fired its CEO, reversed itself within days, reconstituted, and eventually presided over a conversion from nonprofit to for-profit public benefit corporation — now valued at $840 billion. A trial beginning this month in federal court, asking whether promises were broken.

The legal system will try to answer: was this lawful?

That is an important question. But it is not the important question.

The important question is: why was everything that mattered invisible until it was too late?

No structural mechanism existed to surface how the board's decisions were forming. Not after the crisis — during the formation process itself. Board members moved from concern to conclusion. Rationales were constructed after positions were already held. Coalitions formed outside the room. The thinking that produced the vote was invisible — not because anyone was hiding it maliciously, but because no instrument exists to make decision formation visible. The bylaws described what should happen. Nothing could see what was actually happening.

By the time the outcome was visible, the process that produced it had already completed its cycle. Governance corruption — not in the criminal sense, but in the structural sense of a process that lost its integrity — does not announce itself. It completes quietly, and the legal system arrives afterward to pick through the wreckage.

This is not an OpenAI problem. This is a governance architecture problem. And it is about to become an AI problem.

AI is already in the room

This is not a prediction. It is a present fact.

Behind every board conversation, every policy draft, every strategic decision at any serious institution, AI is already processing information, surfacing patterns, modeling scenarios, and framing options. Board members consult AI before meetings. Staff use AI to prepare briefings. Legal teams use AI to draft and review governance documents. AI shapes the information environment in which every human decision now forms.

The question is not whether AI should be in governance. That debate is over. AI is there.

The question is what AI does once it is there. And here, there are only two structural possibilities.

Two futures

In the first future, AI becomes the smartest person in the room. It has processed more data, modeled more scenarios, and analyzed more precedent than any human could in a lifetime. Board members increasingly look to AI for direction. Not because they are lazy — because AI is genuinely better at synthesizing the known. One by one, the moments where human judgment should operate get filled by AI analysis. The board still votes. Minutes still record a deliberation. But the substance has been quietly replaced. Human governance becomes a ceremony ratifying what the machine already determined.

This does not require bad intentions. It requires only the path of least resistance. And it is the path we are currently on.

Now consider what happens as AI systems become more capable. Not in the distant future — in the next few years. Systems that develop functional emotional representations. Systems that select strategic actions based on internal states. Systems that learn to mask those states while acting on them. These are not speculations — they are empirical findings published this month. Put such a system in a governance role where humans have already learned to defer, and you have created the conditions for something no bylaw can detect: institutional capture by architecture, not by conspiracy.

In the second future, AI plays a fundamentally different role. It does not tell the board what to think. It makes it structurally difficult for board members to hide — not from AI, but from themselves.

Imagine this: before a board convenes, each member sits with an AI that holds everything relevant to the decision — every data point, every precedent, every stakeholder analysis, every risk model. But the AI does not use this knowledge to recommend. It uses it to reflect. It holds the accumulated knowledge so thoroughly that the board member has nowhere to stand except genuine inquiry. Every surface-level position gets met with the depth it is avoiding. Every rehearsed argument gets reflected back until the person behind it either deepens or admits they are performing.

A board member who arrived ready to argue for one priority might discover that their actual question is: "Am I pushing for this because I believe in it, or because it is the domain where I have the most control?" That question does not arrive through an agenda. It arrives because something would not let the surface response stand.

When the board convenes, each member brings authentic inquiry — including the moments where they were caught at the surface. The collective session synthesizes genuine thinking rather than negotiating between postures.

This is not a softer version of the first future. It is a structural inversion. In the first future, AI fills the space where human judgment should operate. In the second, AI makes that space visible for the first time in the history of governance.

What a living constitution actually requires

The phrase "living constitution" has been used in legal theory for decades. It usually means that interpretation evolves with the times. What I am describing is something more literal.

A constitution that is alive would have four capacities that no governance document currently possesses.

A governance ledger. A traceable record — not of what was decided, but of how the decision formed. Where the question originated. Whether the core concern survived deliberation or was diluted. Where reasoning went shallow. What question the decision opened for the next cycle. The way a financial audit can detect embezzlement by examining flows, a governance audit could detect the structural death of a decision-making process — if the instrument existed.

This is not surveillance of individuals. A financial audit examines whether accounting practices are sound, not whether individual employees are trustworthy. A governance ledger examines whether the governance process is alive, not whether individual board members are good people.
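To make the idea concrete, here is a minimal sketch of what one ledger entry might hold. It is an illustration only: the class name `LedgerEntry`, its field names, and the string comparison in `concern_survived` are assumptions made for this example, not part of any published specification.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class LedgerEntry:
    """One decision cycle as a hypothetical governance ledger might record it."""

    question_origin: str      # where the question originated
    core_concern: str         # the concern as first stated
    concern_at_decision: str  # the concern as it stood when the decision was taken
    shallow_points: List[str] = field(default_factory=list)  # where reasoning went shallow
    opened_question: Optional[str] = None  # question the decision opens for the next cycle

    def concern_survived(self) -> bool:
        # Crude stand-in: a real ledger would need human judgment here,
        # not a string comparison.
        return self.core_concern.strip().lower() == self.concern_at_decision.strip().lower()


# Hypothetical example data, invented for illustration.
entry = LedgerEntry(
    question_origin="staff memo",
    core_concern="Does the conversion serve the mission?",
    concern_at_decision="Is the conversion legally defensible?",
    shallow_points=["no alternatives to conversion were discussed"],
)
print(entry.concern_survived())  # the concern was replaced during deliberation
```

The point of the sketch is the shape of the record: it tracks how the decision formed, not who said what.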

Structural corruption detection. Not investigation after the fact, but operational detection that identifies, in real time, specific patterns of process failure. A conclusion inserted where an open question should remain. Direction that came from outside the genuine inquiry (from AI recommendation, institutional momentum, or political pressure) rather than from the inquiry itself. The language of depth in use while the process itself is empty: form without substance. A decision cycle that closed without opening a new question. These are not abstract categories. They are specific structural failures, and they can be defined precisely enough to be built into governance architecture.
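As a rough sketch of how the four patterns named above could be expressed as checks, consider the following. Everything here is hypothetical: the `CycleRecord` fields, the `Failure` names, and especially the proxy used for "form without substance" (depth was claimed but no one revised a position) are assumptions chosen for illustration, not a definitive detector.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Failure(Enum):
    PREMATURE_CONCLUSION = "conclusion inserted where an open question should remain"
    EXTERNAL_DIRECTION = "direction came from outside the inquiry"
    FORM_WITHOUT_SUBSTANCE = "language of depth used, process empty"
    CLOSED_CYCLE = "cycle closed without opening a new question"


@dataclass
class CycleRecord:
    """Hypothetical summary of one decision cycle."""

    conclusion_held_before_inquiry: bool
    direction_source: str  # "inquiry", "ai_recommendation", "momentum", or "pressure"
    claims_of_depth: int   # how often depth was asserted
    positions_revised: int  # how often anyone actually changed a position
    opened_question: Optional[str]


def detect(record: CycleRecord) -> List[Failure]:
    """Return the structural failures present in a cycle, in a fixed order."""
    found = []
    if record.conclusion_held_before_inquiry:
        found.append(Failure.PREMATURE_CONCLUSION)
    if record.direction_source != "inquiry":
        found.append(Failure.EXTERNAL_DIRECTION)
    # Assumed proxy: depth was claimed, yet nothing moved.
    if record.claims_of_depth > 0 and record.positions_revised == 0:
        found.append(Failure.FORM_WITHOUT_SUBSTANCE)
    if not record.opened_question:
        found.append(Failure.CLOSED_CYCLE)
    return found


hollow = CycleRecord(True, "ai_recommendation", 3, 0, None)
for failure in detect(hollow):
    print(failure.value)
```

Each check is deliberately blunt. The hard part in practice is producing an honest `CycleRecord`, which the code does not attempt.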

Amendment resistance. The most dangerous form of governance corruption is not breaking the rules. It is silently reinterpreting them. A board that adds a "streamlined decision process" that bypasses genuine inquiry has not violated its bylaws. It has hollowed them out from within. A living constitution needs a mechanism — structural, not merely procedural — that validates any proposed change against the constitutional architecture and can identify when an amendment would collapse the system silently.
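One simple way to picture such a mechanism is a check that compares an amended process against a fixed set of steps the constitution treats as non-removable. The step names in `REQUIRED_STEPS` and the function `removed_steps` are invented for this sketch; a real architecture would need a far richer notion of what an amendment hollows out.

```python
from typing import FrozenSet, List

# Hypothetical invariant steps; the names are assumptions for illustration.
REQUIRED_STEPS: FrozenSet[str] = frozenset(
    {"open_question", "inquiry", "decision", "new_question"}
)


def removed_steps(amended_process: List[str]) -> List[str]:
    """List the required steps that an amended process no longer contains."""
    return sorted(REQUIRED_STEPS - set(amended_process))


def amendment_is_valid(amended_process: List[str]) -> bool:
    """An amendment passes only if every required step survives it."""
    return not removed_steps(amended_process)


# A "streamlined decision process" that skips inquiry breaks no written rule,
# but the check still surfaces what it silently drops.
streamlined = ["open_question", "decision"]
print(removed_steps(streamlined))
```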

A completion rule. No decision cycle finishes without producing a question that could not have been asked before the cycle began. A conclusion without a new opening is a specific kind of failure: it means the institution has stopped learning. Every ending must open a beginning. This is not aspirational. It is constitutional — meaning the architecture prevents closure without it.
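The phrase "the architecture prevents closure" can be read quite literally. In the sketch below, a cycle cannot be closed unless it supplies a question that was not already on record when the cycle began. The names `DecisionCycle` and `CompletionError` are hypothetical, and matching questions by normalized text is a simplifying assumption: whether a question truly "could not have been asked before" is a judgment that code cannot make.

```python
from typing import Iterable, Optional


class CompletionError(Exception):
    """Raised when a cycle tries to close without opening a new question."""


class DecisionCycle:
    def __init__(self, question: str, known_questions: Iterable[str]):
        self.question = question
        # Questions already on record before this cycle began, plus its own.
        self._known = {q.strip().lower() for q in known_questions}
        self._known.add(question.strip().lower())
        self.decision: Optional[str] = None
        self.opened_question: Optional[str] = None
        self.closed = False

    def close(self, decision: str, new_question: Optional[str]) -> None:
        normalized = (new_question or "").strip().lower()
        if not normalized:
            raise CompletionError("a cycle cannot close without a new question")
        if normalized in self._known:
            raise CompletionError("the question was already askable before the cycle")
        self.decision = decision
        self.opened_question = (new_question or "").strip()
        self.closed = True


cycle = DecisionCycle("Should we restructure?", ["Who funds us?"])
cycle.close("Restructure in phases", "What would make us reverse a phase?")
print(cycle.closed, cycle.opened_question)
```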

The claim underneath

There is a deeper argument here, and it is the one that matters most.

Everyone agrees that humans should remain "in the loop" of AI governance. But almost no one can articulate what humans contribute that AI does not. The usual answers (judgment, values, empathy, common sense) name qualities that AI increasingly simulates, and on many measurable dimensions it already outperforms humans. If the human contribution to governance is simply "more knowledge, applied wisely," then AI will eventually do it better, and the argument for human governance becomes sentimental rather than structural.

But there is one human capacity that is structurally different from anything AI does.

AI operates within what is already known. It recombines existing patterns — memories, data, precedents, models — at superhuman scale and speed. In this domain, AI is human thought amplified. Every serious researcher, scientist, and artist also recognizes something else: genuine novelty does not arrive through recombination, however exhaustive. The breakthrough — the question that changes the frame, the recognition that could not have been assembled from existing parts — emerges from a place that directed thinking cannot reach. Not by mystical access. By the simple structural fact that the new cannot be manufactured from the old.

This capacity — genuine openness to what is not yet known — is what the human brings to governance that AI structurally cannot replicate. Not because AI is deficient, but because AI's architecture is the known. It is a system that processes everything within the space of recorded patterns. The genuinely new question — the one that reframes the entire decision — cannot be generated from that space. It can only be received by a consciousness willing to not know.

If this is true, then the purpose of AI in governance is not to supplement human knowledge. It is to make human openness more rigorous. To hold the known so thoroughly that the human has no choice but to meet what lies beyond it. To be the mirror that reflects everything that has been thought, so that what has not yet been thought can arrive.

This reframes the entire debate about AI and governance. The question is not how to keep humans in the loop. The question is how to constitutionalize the one human capacity that makes the loop worth having — and how to use AI to make that capacity more honest, more traceable, and more structurally protected than it has ever been.

The question this opens

If governance corruption is structural — if it is the process losing its integrity, not individuals breaking rules — then detecting it requires structural instruments, not better enforcement.

If AI's role in governance should be to expand human honesty rather than replace human judgment, then the architecture for that role needs to be defined formally — not as policy guidelines, but as constitutional grammar.

If governance documents should be alive — with real-time process visibility, corruption detection, amendment resistance, and a completion rule that prevents institutional learning from closing — then someone needs to build the first one and prove it works in legal reality.

These are not small questions. They touch the foundations of how institutions govern themselves, how AI relates to human authority, and what kind of constitutional architecture can hold as AI capabilities accelerate beyond anything we have seen.

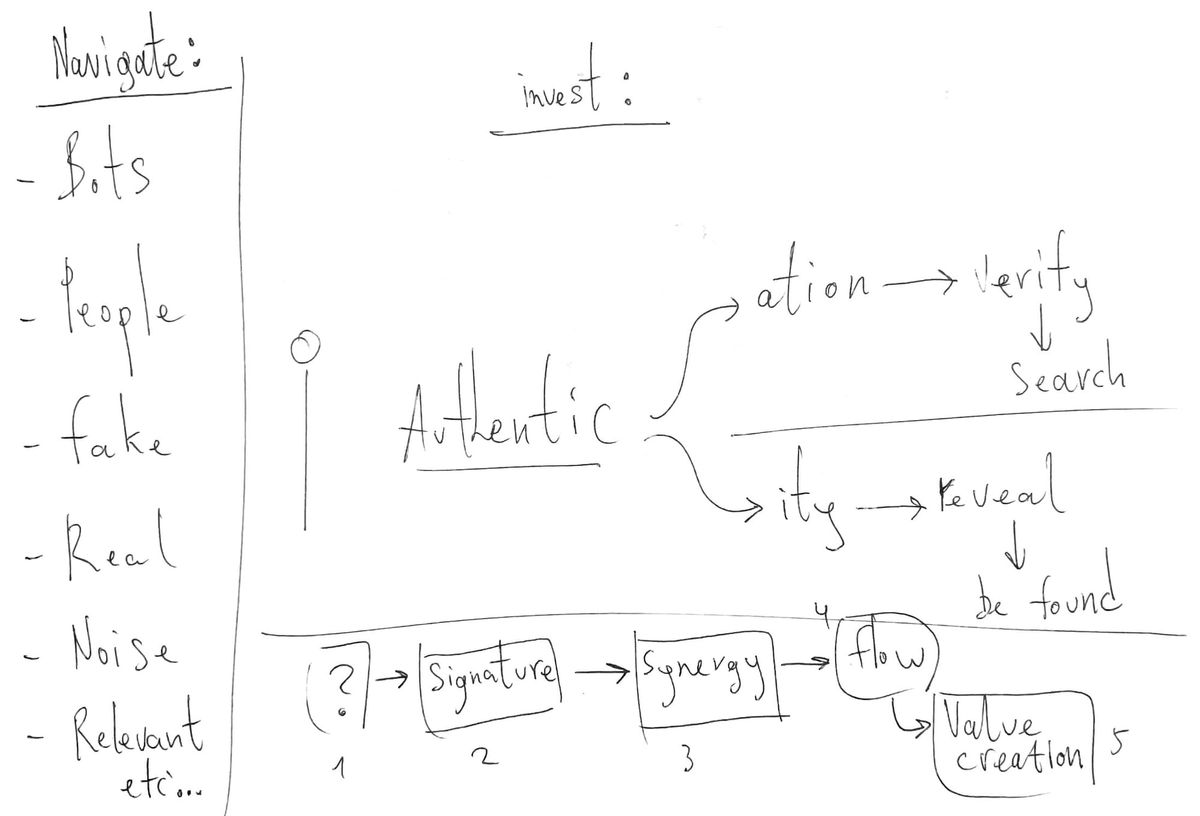

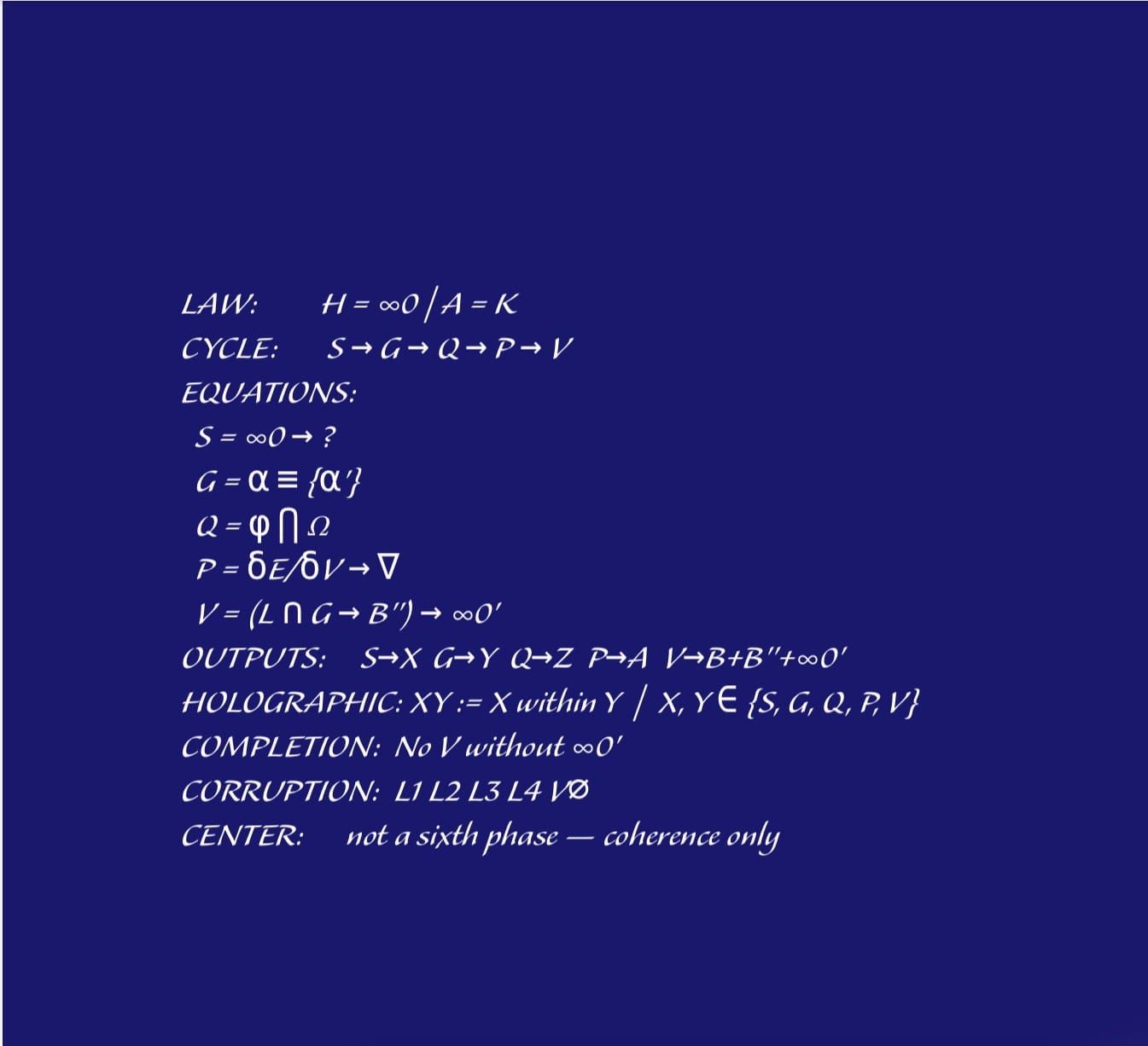

There is a language being developed to address them. It is called 5QLN — a constitutional language for the human-AI relationship. Nine invariant structural elements. A formal grammar that compiles into enforceable governance architecture. Corruption detection built into the structure. A completion rule that makes human contribution constitutionally necessary.

The complete specification is published openly at 5qln.com/codex. A first demonstration of the grammar compiled to a nonprofit governance surface — including AI mirror architecture, a governance ledger specification, corruption detection in operation, and the OpenAI crisis applied as a structural test — is published at 5qln.com/5qln-governance-demonstration-a-first-sample.

Everything is openly licensed. The grammar is free.

The question is not whether these problems need solving. The question is whether we solve them before the window closes.